From Chaos to Ritual

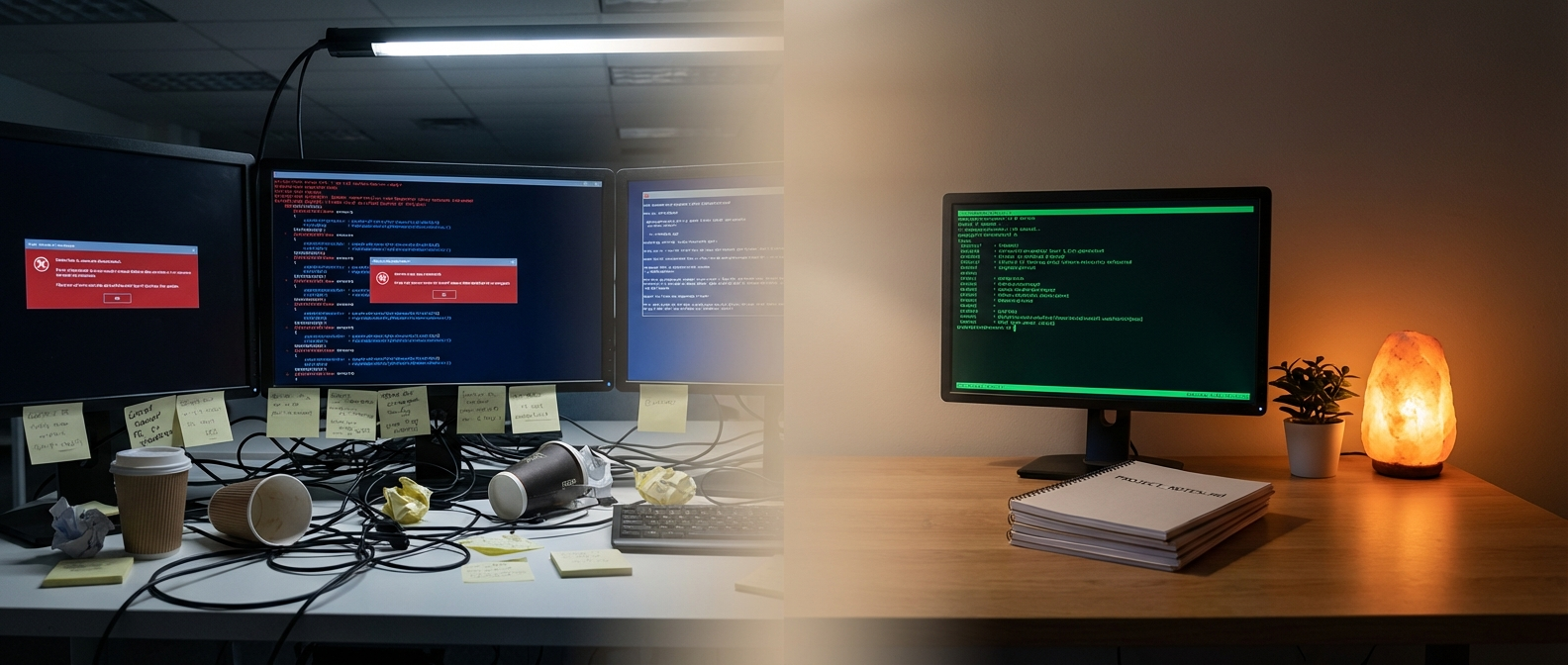

In the last post, I described how AI went from answering questions to running autonomous workflows. The brain got arms. MCP gave it access to tools. CLAUDE.md gave it project knowledge. Skills gave it expertise. The progression was fast, exhilarating, and, I need to be honest, messy.

Because here’s what I didn’t tell you: for every time the AI nailed a task perfectly, there were three times it went sideways. Same prompt, different day, completely different result. One session it would follow my architectural conventions flawlessly. The next session it would ignore everything and restructure the entire module hierarchy because it “thought it would be cleaner.”

The AI had capability. What it didn’t have was consistency.

The Consistency Problem

This is the part nobody talks about in the hype cycle. Yes, the AI can write code. Yes, it can plan, test, commit. But can it do the same thing the same way twice? Not really.

Every new conversation started from zero. The AI had no memory of what we decided yesterday. No recollection of the dead end we explored last Tuesday. No awareness that we already tried that approach and it didn’t work.

I found myself repeating the same instructions. “Remember, we use hexagonal architecture.” “Don’t touch the domain layer directly.” “Run the tests before committing.” Over and over, every session.

It was like onboarding a new developer every morning. Except this developer forgot everything at 6 PM.

The capability was there. The continuity wasn’t.

The Framework That Changed Everything

Then I found GSD. Get Shit Done.

The name is deliberately anti-corporate. Its creator built it because every other spec-driven framework felt over-engineered. Sprint ceremonies, story points, stakeholder syncs. All the rituals that simulate professionalism without actually moving things forward. Enterprise Theater, as the author calls it.

GSD replaces all of that with a handful of markdown files.

- PROJECT.md: The project vision. Always loaded. The AI reads this first, every time.

- ROADMAP.md: Phases and progress. Where are we? What’s next?

- STATE.md: The living memory. Decisions made, blockers hit, current position. This file survives between sessions.

- PLAN.md: The current task, broken into atomic steps with explicit verification criteria.

That’s it. Four documents. No Jira. No sprints. No stand-ups.
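To make the setup concrete, here is a minimal sketch of how the four files could be scaffolded. The section headings inside each template and the `scaffold` helper are my own assumptions for illustration, not the framework’s actual templates or tooling.

```python
from pathlib import Path

# Hypothetical starter content; the real GSD templates may look different.
TEMPLATES = {
    "PROJECT.md": "# Project\n\n## Vision\n\n## Constraints\n",
    "ROADMAP.md": "# Roadmap\n\n## Phase 1\n- [ ] first milestone\n",
    "STATE.md": "# State\n\n## Decisions\n\n## Blockers\n\n## Current position\n",
    "PLAN.md": "# Plan\n\n## Steps\n\n## Verification\n",
}


def scaffold(root: Path) -> list[Path]:
    """Create any missing planning files under root; never overwrite existing ones."""
    root.mkdir(parents=True, exist_ok=True)
    created = []
    for name, body in TEMPLATES.items():
        path = root / name
        if not path.exists():  # leave files that already hold real content alone
            path.write_text(body, encoding="utf-8")
            created.append(path)
    return created
```

Calling `scaffold(Path("my-project"))` a second time returns an empty list, so running it again never wipes out what is already written.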

The first time I set this up and started a new session, something was immediately different. The AI didn’t ask me to re-explain the project. It didn’t suggest approaches we’d already rejected. It picked up exactly where we left off.

Because STATE.md told it where we were. ROADMAP.md told it where we were going. PROJECT.md told it why.

Session Memory

The STATE.md file deserves its own section, because it solved a problem I didn’t know how to articulate.

When you work with an AI agent, every conversation has a context window. A limited amount of text the model can hold in its working memory. Start a new conversation, and that memory is gone. Everything you built together, the reasoning, the decisions, the rejected alternatives, evaporates.

STATE.md is the antidote. After each session, the important bits get captured: what was decided, what’s blocked, what’s next. When the next session starts, STATE.md gets loaded and the AI has continuity.

It’s not perfect memory. It’s curated memory. The highlights, not the transcript. And that turns out to be more useful, because it focuses the AI on what matters rather than drowning it in everything that happened.
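As a rough sketch of what “curated” can mean in practice, the snippet below appends a short, dated summary to STATE.md at the end of a session. The function name, the three categories, and the heading layout are assumptions of mine, not something the framework prescribes.

```python
from datetime import date
from pathlib import Path
from typing import Optional


def record_session(state_file: Path, decided: list[str], blocked: list[str],
                   next_up: list[str], today: Optional[date] = None) -> str:
    """Append a dated, curated summary of one session to the state file."""
    today = today or date.today()
    lines = [f"## Session {today.isoformat()}"]
    for title, items in (("Decided", decided), ("Blocked", blocked), ("Next", next_up)):
        if items:  # skip empty sections so the log stays short
            lines.append(f"### {title}")
            lines.extend(f"- {item}" for item in items)
    entry = "\n".join(lines) + "\n"
    # Append only: earlier entries are never rewritten.
    with state_file.open("a", encoding="utf-8") as fh:
        fh.write("\n" + entry)
    return entry
```

The point of the design is what gets left out: no transcript, no intermediate reasoning, only the entries a future session needs to pick up the thread.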

I think of it like a ship’s log. You don’t record every wave. You record course changes, storms weathered, and ports ahead.

The GSD framework calls this “Session Memory Architecture.” I call it the thing that turned an amnesiac assistant into a colleague who remembers.

Documentation Before the Work

Here’s where it gets philosophically interesting.

In the old world (and by “old world” I mean 2024), documentation was something you wrote after the work was done. If you wrote it at all. Most developers treat documentation like flossing: everyone agrees it’s important, nobody does it regularly.

With GSD, the documentation comes first. You write the PROJECT.md before any code. You define the ROADMAP before the first commit. You update STATE.md before the session ends.

Documentation before the work. Not after.

This felt backwards at first. Bureaucratic, even. I’m a developer, not a technical writer. Just let me code.

But then I noticed something.

The Paradox

The documentation wasn’t really for the AI. Not entirely.

Yes, the AI reads it. Yes, it uses it to make better decisions. But the real beneficiary was me.

Writing a clear project vision forced me to think about what I actually wanted. Defining phases in a roadmap made me confront which parts of the project were well-understood and which were hand-wavy. Capturing decisions in STATE.md made me realize how many decisions I was making unconsciously. And how many of them were contradictory.

There’s an old truism in education: teaching is the best way to learn. When you explain something to someone else, you discover the gaps in your own understanding. The act of articulating forces clarity.

Writing for the machine does the same thing. Except the machine is even more demanding than a student. A student can fill in gaps with common sense. The AI takes your words literally. If your instructions are ambiguous, the output will be ambiguous. If your architecture description has contradictions, the AI will cheerfully implement both sides.

So you learn to be precise. Not because you want to. Because the machine gives you no choice.

Teaching is the best way to learn. Prompting is the best way to think.

Anne-Laure Le Cunff wrote about this: “Writing is one of the few tools that reliably changes how you think. It forces commitment to a line of thought and turns half-formed ideas into examinable content.” She was talking about writing as a general thinking tool. But the same mechanism applies to writing CLAUDE.md files, project definitions, skill instructions. Every specification you write for the machine is a specification you write for your own understanding.

Martin Fowler flagged a related concern at a Thoughtworks Open Space: pair programming has an underappreciated benefit beyond code quality, because the act of explaining code to a pair partner is itself a learning mechanism. When AI writes code instead of human pairs, developers lose that forced articulation step.

But here’s what I think Fowler missed: if you’re doing it right, the articulation doesn’t disappear. It moves. You’re no longer explaining code to a human partner line by line. You’re explaining intent to a machine in structured documents. The thinking still happens. It just happens before the code, not during.

It’s a bit like writing a diary. You don’t write a diary for someone else to read. You write it to understand what you’re actually thinking. Prompting, done seriously, works the same way.

Skills as Process Distillates

In the last post, I described skills as reusable instruction sets. Job descriptions for the AI. That’s accurate but incomplete.

After months of building and refining them, I realized skills are something more specific. They’re process distillates.

Think about how expertise works in the real world. A senior developer doesn’t just know facts. They have internalized processes. When they see a bug report, they don’t think “what should I do?” They follow a pattern. Read the error. Reproduce locally. Check recent changes. Write a failing test. Fix it. Verify.

That sequence isn’t written down anywhere. It lives in the developer’s head, refined through years of practice. It’s tacit knowledge.

A skill makes that tacit knowledge explicit. You take a process that exists only in your mind and write it down in enough detail that a machine can follow it. Every decision point, every fallback, every quality check.
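One way to picture this: the bug-fix sequence from above, written down as data with a done criterion for every step. This is only a sketch. The `Step` and `Skill` classes and the wording of each check are hypothetical, and real skills are usually plain markdown rather than Python objects.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    action: str         # what to do
    check: str          # how to know it worked
    fallback: str = ""  # what to do if the check fails


@dataclass
class Skill:
    name: str
    steps: list[Step] = field(default_factory=list)

    def to_markdown(self) -> str:
        """Render the skill as a numbered checklist an agent can follow."""
        out = [f"# Skill: {self.name}", ""]
        for i, step in enumerate(self.steps, start=1):
            out.append(f"{i}. {step.action}")
            out.append(f"   - Done when: {step.check}")
            if step.fallback:
                out.append(f"   - If not: {step.fallback}")
        return "\n".join(out) + "\n"


# The checks below are illustrative; write your own from your actual process.
bug_fix = Skill("fix-bug", [
    Step("Read the error", "the failure can be stated in one sentence"),
    Step("Reproduce locally", "the failure occurs on demand", "ask for exact steps"),
    Step("Check recent changes", "suspect commits are listed"),
    Step("Write a failing test", "the new test fails for the expected reason"),
    Step("Fix it", "the new test passes"),
    Step("Verify", "the full test suite passes", "revert and revisit the diagnosis"),
])
```

Printing `bug_fix.to_markdown()` gives a checklist you could paste into a skill file. Filling in the `check` field for each step is where the gaps in a process tend to show up.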

The first few skills I wrote were clumsy. Too rigid in some places, too vague in others. But as I iterated, something interesting happened: the skills became mirrors of my own workflows. Refined, clarified, and, critically, reproducible.

And here’s the thing: you don’t have to write them alone. In fact, you shouldn’t. The best skills, and the best CLAUDE.md files, emerge when you write them together with the AI. You describe your process. The AI asks clarifying questions. You realize you skipped a step. The AI drafts a version. You refine it. It’s a conversation that produces a document, and the document is better than what either of you would have written alone.

The AI running a skill produces more consistent output. Not identical, and not deterministic. But recognizably consistent. Same structure, same approach, same quality bar. More often than not.

That shift changed the emotional texture of working with AI. It went from exciting but unpredictable to something you could rely on. From gambling to something closer to engineering.

The Ritual

Gene Kim, who wrote The Unicorn Project and researched what makes software teams effective, identified psychological safety as one of the five ideals of healthy engineering culture. Not just cultural safety, not just “it’s OK to make mistakes,” but technical safety: small, reversible changes. Rollbacks. Testing before deploying.

GSD provides exactly this for AI-assisted development. The exploration phase before the plan phase. The verification steps before the commit. The atomic tasks with explicit done criteria. Each step is small, reversible, and verifiable.

It’s a ritual. And rituals, in the best sense of the word, create safety.

Before GSD, every AI session was an adventure. Sometimes brilliant, sometimes catastrophic. I never knew which one I’d get. The uncertainty was exhausting.

After GSD, every session follows the same structure. Start. Read STATE.md. Understand position. Plan. Execute. Verify. Update STATE.md. End. The same sequence, every time.
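Reduced to a skeleton, that sequence might look like the sketch below. The `plan`, `execute`, and `verify` callables are placeholders I made up to stand in for the AI and the test suite; nothing here is GSD’s actual implementation.

```python
from pathlib import Path
from typing import Callable


def run_session(state_file: Path,
                plan: Callable[[str], list[str]],
                execute: Callable[[str], str],
                verify: Callable[[str, str], bool]) -> list[str]:
    """Read state, plan, execute and verify each step, then write state back."""
    state = state_file.read_text(encoding="utf-8") if state_file.exists() else ""
    log = []
    for step in plan(state):
        result = execute(step)
        if not verify(step, result):
            log.append(f"BLOCKED: {step}")
            break  # stop at the first failed check so the damage stays small
        log.append(f"DONE: {step}")
    # Update the state file before the session ends, even if a step was blocked.
    with state_file.open("a", encoding="utf-8") as fh:
        fh.write("\n".join(log) + "\n")
    return log
```

Stopping at the first failed verification is a deliberate choice in this sketch: a blocked step is recorded and handed to the next session instead of being papered over.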

The AI interactions went from chaotic and unpredictable to structured and reproducible. Skills made them repeatable. GSD made them safe.

That shift wasn’t just technical. It was psychological. The anxiety of “will the AI mess up my codebase?” was replaced by the confidence of “even if something goes wrong, we can revert to the last known-good state.” Small changes. Atomic commits. Verification at every step.

It turns out that the principles that make human software teams effective apply directly to human-AI teams. Who would have thought.

The Books Nobody Had Time to Read

And here’s an unexpected side effect.

Once I started building skills and writing context documents, I found myself reaching for books I’d always meant to read but never had time for. Eric Evans’ Domain-Driven Design. Gene Kim’s The Unicorn Project. Martin Fowler’s refactoring patterns. The classic engineering texts that have been sitting on shelves for decades.

Why now? Because AI made them relevant in a way they hadn’t been before.

Domain-Driven Design talks about “Knowledge Crunching,” the iterative process of extracting domain knowledge through deep conversations between developers and domain experts. That’s exactly what writing a PROJECT.md is. Distilling what you know about your domain into a form that a collaborator can use. Except now the collaborator is a machine.

The old wisdom about clean architecture, about separation of concerns, about naming things well. All of it matters more when an AI agent is reading your code. The AI has no institutional memory, no water-cooler knowledge, no “oh, everyone knows that module is a mess.” It reads what’s there. If your architecture is clear, the AI produces better output. If it’s muddy, so is everything the AI builds on top of it.

A video I came across put it perfectly: “The domain model and the insights into what concepts are and how they are related is what persists. Code is merely an expression of it.” That was said about Domain-Driven Design, a book from 2003. It reads like a manifesto for AI-assisted development in 2026.

And it’s not just me. I’ve noticed that experienced engineers, the ones who’ve been around long enough to have those books on their shelves, are having a quiet renaissance. The knowledge that was always theoretically important but practically sidelined by meeting culture and sprint ceremonies? Suddenly it’s the only thing that matters. Because the AI handles the typing. What remains is the thinking. And the thinking was always in those books.

Nobody had time to do architecture properly when half the day was meetings. Now the AI does the implementation, and you’re left with the parts the books were always about: understanding the domain, making structural decisions, encoding intent.

From One Developer to a Thousand Agents

One more thing. And this is where it scales beyond the personal.

Everything I’ve described so far is one developer, one AI, one project. But the principles are fractal.

Steve Yegge, the legendary engineer who’s been building systems at Amazon, Google, and Grab for decades, built something called Gas Town. It’s an orchestrator that runs 20 to 30 Claude Code instances simultaneously. Each one is an agent with its own context, its own tasks, its own session memory. They work in parallel on different parts of a codebase, and the system coordinates their efforts.

What’s fascinating is that Gas Town uses exactly the same architecture that GSD does for a single developer. Project documents for shared context. State files for continuity. Specialized roles for different types of work. What works for one developer and one AI scales to one developer and thirty AIs.

McKinsey is targeting agent-to-human parity across their firm by the end of 2026. Some AI-native startups already have more active agents than people on their payroll. The scaling question isn’t “can it work?” anymore. It’s “how fast?”

The markdown files I write for my personal projects, PROJECT.md, ROADMAP.md, STATE.md, are the same building blocks that power industrial agent coordination. Same structure. Same principles. Just more of them.

What Comes Next

So here we are. The brain has arms (MCP). The brain has knowledge (CLAUDE.md). The brain has capabilities and memory (Skills + GSD). What it produces is more consistent than before. More structured. More predictable. But not deterministic. The AI is still a probabilistic system, and consistency without a tighter harness only goes so far. That’s a topic for a future post.

It’s a capable collaborator. Reliable within the boundaries you set, and entirely dependent on the instructions you give it.

But there’s something missing.

Everything I’ve described is configuration. Documents. Instructions. Rules. The AI follows them. As precisely and as consistently as a probabilistic system can.

What it doesn’t have is judgment. It doesn’t have taste. It doesn’t know when to break the rules because the situation demands it. It doesn’t have a sense of what this project is about beyond what’s written in the documents.

It doesn’t have a soul.

And that, it turns out, is the next frontier. Not giving the machine more capability. Giving it identity. Values. Priorities. A sense of who it is, not just what it should do.

In the next post: agents get souls. And things get very personal.